Standing on the brink of a technological revolution, industry experts anticipate a profound transformation in a significant portion of global software, with AI and machine learning (ML) at its core. According to PwC forecasts, AI could contribute up to $15.7 trillion to the global economy by 2030, making global GDP as much as 14% higher than it would otherwise be. The continual evolution of databases and identity management, coupled with AI, is solidifying intelligence as the cornerstone of contemporary software applications.

From cloud computing to networking, ML is revolutionizing our approach to essential elements of software infrastructure. Web3, representing the decentralized and open evolution of the World Wide Web, is no exception to this paradigm shift. As Web3 progressively integrates into mainstream usage, machine learning is positioned to play a pivotal role in advancing AI-centric Web3 technologies.

However, the infusion of AI into Web3 comes with its own set of technical challenges and impediments. To unlock the full potential of AI within Web3, it is imperative to identify and surmount the obstacles hindering this convergence. Historically, centralization has been intrinsic to AI solutions, but as we navigate the decentralized realm of Web3, a critical question arises: How can AI adapt and thrive in this novel landscape, shedding its conventional centralization tendencies?

This article embarks on an exploratory journey, delving into the intricacies of the role of AI in the Web3 ecosystem. It will discuss the challenges and opportunities on the horizon, shedding light on the complexities involved in the integration of AI with Web3 technologies.

So, without any further ado, let’s get started!

What Exactly is Web3?

Web3 represents the evolution of the internet, envisioning a decentralized, secure, and user-centric digital ecosystem that prioritizes sharing power and benefits. It marks a departure from the dominance of a few major tech companies, aiming to grant users greater control over their data and ensure enhanced privacy without censorship. Although there is no standardized definition for Web3, its key features include decentralization, permissionless and trustless interactions, and integration with cutting-edge technologies like artificial intelligence (AI) and machine learning (ML).

Decentralization lies at the core of Web3, which leverages blockchain technology to store information across a network of nodes instead of on a single server reached through a unique web address. This shift gives users more control over the vast databases currently held by internet giants. In the Web3 era, users can sell the data generated from their various computing resources while maintaining autonomy over that data.

Web3 operates on open-source software and embraces decentralization, with decentralized applications (dApps) running on blockchains, ensuring a permissionless and trustless environment. The integration of AI and ML is a crucial aspect of Web3, incorporating Semantic Web concepts and natural language processing. This approach allows computers to comprehend information akin to human understanding, facilitating advancements in areas such as drug development. The utilization of machine learning in Web3 enhances computational capabilities, enabling computers to deliver faster and more relevant results.

Moreover, Web3 fosters enhanced connectivity, creating a landscape where information and content are intricately linked and accessible across multiple applications. The proliferation of internet-connected devices, coupled with the Internet of Things (IoT), further contributes to this interconnected digital ecosystem. As Web3 continues to unfold, the amalgamation of decentralization, AI, and ML promises a transformative paradigm in the way we interact with and leverage the capabilities of the internet.

What is AI?

Artificial intelligence (AI) involves computers simulating human intelligence, encompassing applications such as expert systems, natural language processing (NLP), speech recognition, and computer vision. AI systems rely on specialized hardware and software to develop and train machine learning algorithms. These systems typically operate by processing substantial amounts of labeled data and identifying patterns and correlations to make predictions about future states.

For instance, training a chatbot involves exposing it to text chat examples to learn how to engage in real-life conversations. Similarly, image recognition tools learn to identify objects in images through exposure to vast datasets. AI programming revolves around three cognitive skills: reasoning, learning, and self-correction. There are two primary types of artificial intelligence:

1. Strong AI:

- Complex systems capable of performing tasks resembling human activities.

- Programmed to solve problems autonomously, without human intervention.

- Examples often cited include self-driving cars and advanced medical operating rooms, although such systems still operate within narrow, well-defined domains.

2. Weak AI:

- Designed for specific tasks.

- Examples include video games and personal assistants like Siri and Amazon’s Alexa, which answer questions by engaging in dialogues.

Thus, the integration of artificial intelligence in Web3 introduces a new dimension, offering enhanced capabilities for decentralized, secure, and user-centric digital ecosystems.

The Role of AI in Web3: Transforming Dynamics from Centralization to Empowerment

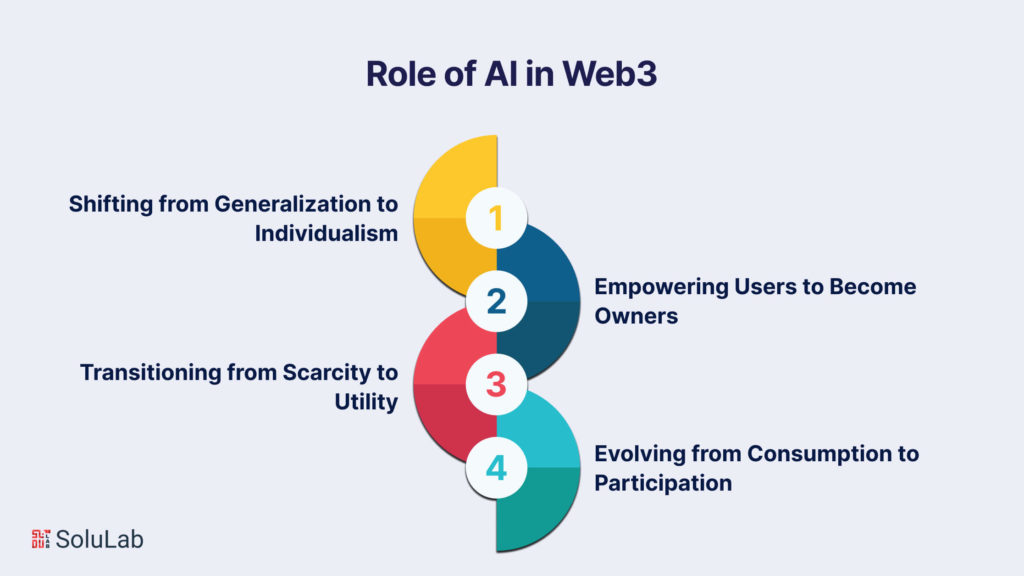

In this decentralized landscape, the empowerment facilitated by AI in Web3 transcends traditional boundaries, fostering a democratized digital environment. By prioritizing individualism, Web3 ensures that the benefits of AI are not concentrated in the hands of a select few but are accessible to a diverse array of creators and users. This shift heralds a new era where the ownership of data becomes a fundamental right, content creators reclaim their due recognition and compensation, and collaborative networks redefine the relationship between creators and their audiences. As the dynamics evolve from centralized control to user empowerment, Web3 emerges as a catalyst for a more inclusive, equitable, and participatory digital ecosystem.

Now, let’s have a look at the role of AI in Web3 in the following areas:

1. Shifting from Generalization to Individualism: Traditional models of centralized AI have predominantly catered to a generalized user base, often favoring the privileged few. In the Web3 era, the focus is on elevating AI capabilities to serve all individuals, transcending wealth disparities. Each AI model is now uniquely trained on the creator’s personal knowledge, passions, and experiences, fostering a more inclusive and personalized approach.

2. Empowering Users to Become Owners: Historically, a limited number of private entities have controlled and profited from the content generated, leaving creators marginalized and under-compensated. Web3 disrupts this paradigm by granting creators full control over their data, AI models, and digital assets. Through blockchain-based platforms, creators gain exclusive access and authority over their data, allowing them to repurpose and share it as they see fit.

3. Transitioning from Scarcity to Utility: In Web3, the mere presence of tokens is no longer sufficient for user ownership and incentives. The emphasis is on ensuring that tokens hold tangible value for users. Personal AI takes center stage, creating and unlocking new value from the content, creativity, and intellect contributed by users. This approach transforms tokens into meaningful tools, fostering collaborations and generating value for both individuals and their communities through the utilization of social tokens.

4. Evolving from Consumption to Participation: Current platforms predominantly cater to mass consumption, creating a one-way flow where creators produce content and audiences consume it. Web3 introduces a paradigm shift where creators and their communities possess their own platforms, empowered by personal AIs and distinctive methods of exchanging value through social tokens. This revolutionary approach establishes a new architectural framework for collaborative networks, redistributing power from platforms to individuals and reshaping the dynamics between value consumption and creation.

AI’s Role in Shaping Web3 Intelligence Layers

Machine learning (ML) is a fundamental element of artificial intelligence (AI), and in Web3 it shapes multiple layers of intelligence. The incorporation of ML extends across several key layers of the Web3 stack, resulting in the emergence of ML-driven insights at critical junctures.

1. Intelligent Blockchains:

- Traditional blockchain platforms have primarily focused on the decentralized processing of financial transactions. However, the evolution of Web3 introduces a new era where ML becomes integral to blockchain capabilities.

- Next-generation layer 1 and layer 2 blockchains will leverage ML-driven functionalities. For instance, a blockchain runtime may employ ML predictions for transactions, enhancing the scalability of consensus protocols.

- AI’s contribution to blockchain security is notable, with the ability to swiftly mine data, predict behavior, detect fraudulent activities, and prevent attacks.

2. Intelligent Protocols:

- ML capabilities are seamlessly integrated into the Web3 stack through smart contracts and protocols, exemplified prominently in the decentralized finance (DeFi) space.

- DeFi platforms are evolving towards automated market makers (AMMs) and lending protocols with intelligent logic based on ML models. Imagine lending protocols utilizing intelligent scoring systems to balance loans from diverse wallet types.
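To make the idea of an intelligent scoring system a little more concrete, here is a minimal Python sketch of how a lending protocol might turn wallet history into a collateral requirement. Everything in it is an assumption made for illustration: the `WalletFeatures` fields, the weights (written as if they came from a trained logistic regression model), and the collateral range are hypothetical and not taken from any real protocol.

```python
import math
from dataclasses import dataclass


@dataclass
class WalletFeatures:
    """Hypothetical on-chain features; a real protocol would choose its own."""
    age_days: int       # days since the wallet's first transaction
    repaid_loans: int   # loans repaid in full
    liquidations: int   # times the wallet's collateral was liquidated


# Illustrative weights, as if taken from a trained logistic regression model.
WEIGHTS = {"age_days": 0.004, "repaid_loans": 0.6, "liquidations": -1.2}
BIAS = -1.0


def repayment_score(w: WalletFeatures) -> float:
    """Return a score in (0, 1); higher means repayment looks more likely."""
    z = (BIAS
         + WEIGHTS["age_days"] * w.age_days
         + WEIGHTS["repaid_loans"] * w.repaid_loans
         + WEIGHTS["liquidations"] * w.liquidations)
    return 1.0 / (1.0 + math.exp(-z))


def required_collateral_ratio(score: float, lo: float = 1.2, hi: float = 2.0) -> float:
    """Map the score linearly onto a ratio between hi (risky) and lo (trusted)."""
    return hi - (hi - lo) * score


new_wallet = WalletFeatures(age_days=0, repaid_loans=0, liquidations=0)
veteran = WalletFeatures(age_days=730, repaid_loans=5, liquidations=0)
print(required_collateral_ratio(repayment_score(new_wallet)))
print(required_collateral_ratio(repayment_score(veteran)))
```

In this sketch, a wallet with a longer and cleaner history is asked for less collateral than a brand-new one, which is one way a protocol could balance loans across different wallet types.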

3. Intelligent dApps:

- Decentralized applications (dApps) within Web3 are anticipated to be key vehicles for incorporating ML-driven features rapidly.

- This trend is evident in non-fungible tokens (NFTs), where the next generation is envisioned to transcend static images. Future NFTs may exhibit intelligent behavior, adapting to the profile and preferences of their owners.

The interplay between AI and Web3, manifested in these layers of intelligence, showcases the transformative potential of ML in enhancing the capabilities and functionalities of decentralized digital ecosystems.

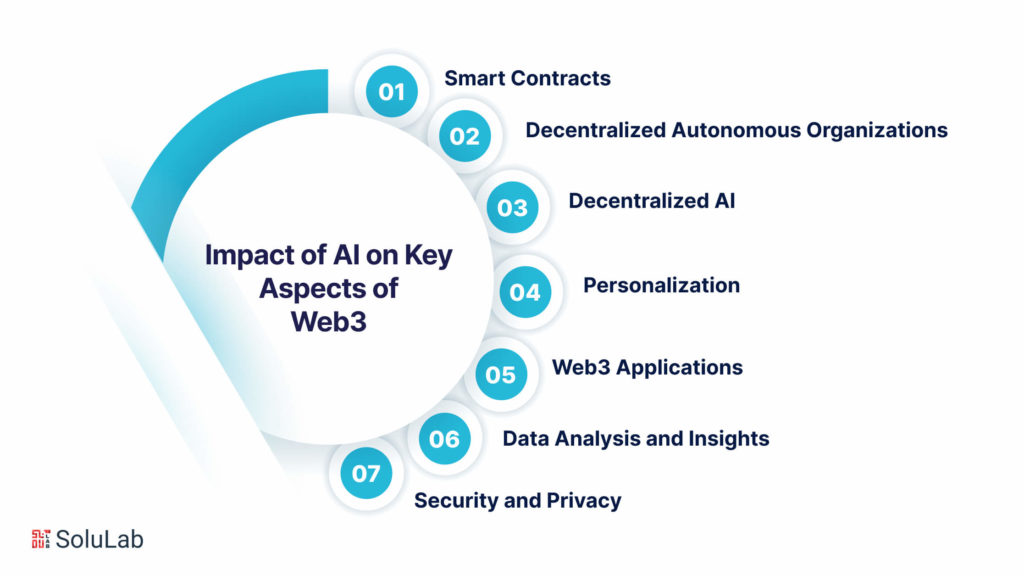

The Transformative Impact of AI on Key Aspects of Web3

Artificial intelligence (AI) is a driving force in shaping the evolution of Web3, ushering in a decentralized, secure, and user-centric Internet experience. Its integration into various facets of Web3 promises to deliver heightened intelligence, efficiency, and personalized digital interactions. Now, let’s have a look at some of the applications of AI in Web3:

1. Smart Contracts

- AI’s role in smart contracts extends beyond traditional functionalities. It introduces advanced decision-making capabilities, enabling dynamic and intelligent transactions on blockchain-based decentralized platforms.

- Smart contracts, inherently self-executing, can be enhanced with AI for complex decision-making processes, incorporating data analysis, pattern recognition, and predictions. This integration enables adaptable responses to changing conditions and more intelligent transaction executions.

2. Decentralized Autonomous Organizations (DAOs)

- Web3 AI significantly enhances governance and decision-making within DAOs, organizations governed by blockchain-encoded rules. Integration of AI streamlines decision-making by automating data analysis, pattern recognition, and historical outcome assessments.

- By leveraging machine learning, AI aids in identifying relevant proposals, predicting their impact, and prioritizing them for member consideration. This enhances the transparency, efficiency, and adaptability of DAOs in the rapidly evolving Web3 landscape.

3. Decentralized AI

- The fusion of AI with decentralized technologies, such as blockchain and distributed computing, defines decentralized AI. Leveraging decentralized resources and data storage, it introduces enhanced privacy, security, and reduced reliance on centralized entities.

- Distributed model training helps protect data privacy by training models on individual devices, an approach commonly known as federated learning, and collaborative model development enables secure collaboration without sharing sensitive data. Incentive mechanisms, facilitated by decentralized AI, reward participants for contributing data, computing resources, or expertise.
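Training on individual devices can be illustrated with federated averaging, one well-known technique for this kind of setup. This article does not prescribe a specific algorithm, so treat the following as a minimal sketch under simple assumptions: a one-parameter model `y = w * x`, three hypothetical devices, and a coordinator that only ever sees model weights, never the raw samples.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # (x, y)


def local_update(w: float, data: List[Sample], lr: float = 0.01, epochs: int = 5) -> float:
    """Run a few gradient-descent steps for y = w * x on one device's private data."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_round(w_global: float, clients: List[List[Sample]]) -> float:
    """One round: each client trains locally, then weights are averaged by sample count."""
    total = sum(len(c) for c in clients)
    updates = [(local_update(w_global, c), len(c)) for c in clients]
    return sum(w * n for w, n in updates) / total


# Hypothetical private datasets; every sample follows y = 3 * x.
clients = [
    [(1.0, 3.0), (2.0, 6.0)],
    [(3.0, 9.0)],
    [(1.5, 4.5), (2.5, 7.5), (0.5, 1.5)],
]

w = 0.0
for _ in range(50):
    w = federated_round(w, clients)
print(round(w, 3))
```

The shared weight moves towards 3 even though no device ever reveals its data. In a decentralized AI network, the averaging step could in principle be performed or verified by network participants, which is also where incentive mechanisms would plug in.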

4. Personalization

- In the Web3 context, AI plays a pivotal role in elevating personalization, offering users more engaging and tailored experiences.

- By analyzing user data, AI algorithms customize content, recommendations, and services based on individual preferences. Techniques like collaborative filtering and content-based filtering contribute to generating personalized recommendations and interfaces.
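As a rough illustration of collaborative filtering, the sketch below recommends dApps to a user based on how similar users rated them. The users, the dApp names, and the ratings are invented for the example, and real systems would use far more data and more robust similarity measures.

```python
import math
from typing import Dict, List

Ratings = Dict[str, Dict[str, float]]  # user -> {item: rating}


def cosine(a: Dict[str, float], b: Dict[str, float]) -> float:
    """Cosine similarity computed over the items both users have rated."""
    shared = set(a) & set(b)
    if not shared:
        return 0.0
    dot = sum(a[i] * b[i] for i in shared)
    norm_a = math.sqrt(sum(a[i] ** 2 for i in shared))
    norm_b = math.sqrt(sum(b[i] ** 2 for i in shared))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def recommend(user: str, ratings: Ratings, top_n: int = 3) -> List[str]:
    """Rank unseen items by the similarity-weighted ratings of other users."""
    scores: Dict[str, float] = {}
    weights: Dict[str, float] = {}
    for other, their in ratings.items():
        if other == user:
            continue
        sim = cosine(ratings[user], their)
        if sim <= 0:
            continue
        for item, rating in their.items():
            if item not in ratings[user]:
                scores[item] = scores.get(item, 0.0) + sim * rating
                weights[item] = weights.get(item, 0.0) + sim
    ranked = sorted(scores, key=lambda i: scores[i] / weights[i], reverse=True)
    return ranked[:top_n]


# Hypothetical ratings of dApps on a 1-5 scale.
ratings = {
    "alice": {"dex_a": 5, "nft_market": 4},
    "bob": {"dex_a": 5, "nft_market": 4, "lending_x": 5, "game_y": 1},
    "carol": {"dex_a": 1, "nft_market": 2, "game_y": 5, "lending_x": 2},
}
print(recommend("alice", ratings))
```

Because Alice's ratings line up more closely with Bob's than with Carol's, Bob's favorite (`lending_x`) is ranked ahead of Carol's (`game_y`).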

5. Web3 Applications

- Natural Language Processing (NLP), a branch of AI, enables seamless communication between users and Web3 applications, supporting intuitive interfaces and bridging the gap between human language and digital services.

- NLP enhances user interactions, facilitates context-aware communication, and automates content generation. It empowers Web3 applications to understand and respond to user queries in natural language, fostering user-friendly and accessible interfaces.

6. Data Analysis and Insights

- AI-driven data analysis within Web3 processes vast datasets generated by decentralized platforms. Machine learning, deep learning, and NLP uncover patterns, correlations, and trends, providing actionable insights.

- These insights inform the development and optimization of Web3 applications, identifying bottlenecks, inefficiencies, and opportunities for innovation. AI enhances security and trust by proactively addressing vulnerabilities and malicious activities.

7. Security and Privacy

- AI contributes significantly to security and privacy within Web3 by detecting and preventing cyber threats. Machine learning algorithms identify abnormal patterns and potential vulnerabilities, ensuring the integrity of services.

- Advanced authentication methods, such as biometric recognition, bolster security. AI-driven encryption and anonymization techniques safeguard user data, preserving privacy in decentralized environments.
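Detecting abnormal patterns can start with something much simpler than a trained model. The sketch below is a statistical stand-in rather than a machine learning algorithm: it flags transaction amounts whose modified z-score (based on the median and the median absolute deviation) is unusually high. The sample amounts are made up, and the 3.5 threshold is a commonly used default rather than a value from this article.

```python
import statistics
from typing import List


def flag_outliers(amounts: List[float], threshold: float = 3.5) -> List[int]:
    """Return the indexes of values whose modified z-score exceeds the threshold."""
    median = statistics.median(amounts)
    mad = statistics.median([abs(a - median) for a in amounts])
    if mad == 0:
        return []  # no spread to measure against
    return [
        i for i, a in enumerate(amounts)
        if 0.6745 * abs(a - median) / mad > threshold
    ]


# Hypothetical transfer amounts from one wallet; one is far larger than usual.
amounts = [1.0, 1.2, 0.9, 1.1, 1.0, 250.0, 1.05]
print(flag_outliers(amounts))
```

A production system would likely combine many signals (counterparties, timing, contract calls) and a learned model, but the principle is the same: establish what normal looks like, then surface what deviates from it.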

The synergy of AI in Web3 reshapes the digital landscape, offering a glimpse into a future where intelligence, security, and personalization converge to redefine user experiences in a decentralized, user-centric internet.

Why is Web3 Adopting ML Technology From the Top Down?

The top-down adoption of machine learning (ML) technologies in Web3 is driven by the intricate nature of the underlying infrastructure and the requirement for specialized knowledge in integrating ML solutions with decentralized systems. In this context, top-down adoption refers to the development and implementation of ML technologies by experts and organizations deeply acquainted with Web3, preceding widespread adoption by the general user base.

Several factors contribute to the top-down adoption pattern of ML technologies in Web3:

- Technical Complexity: The integration of ML technologies into Web3 platforms demands a comprehensive understanding of both decentralized infrastructure and ML algorithms. The intricate nature of underlying systems, such as blockchain, smart contracts, and decentralized applications, necessitates expertise for the seamless integration of ML solutions.

- Security and Privacy Concerns: Web3 aims to provide secure and privacy-preserving solutions. Careful incorporation of ML technologies into Web3 is essential to uphold these goals. Top-down adoption allows experts and organizations with a deep grasp of security and privacy implications to design and implement ML solutions in alignment with Web3’s core principles.

- Standardization and Interoperability: Effective adoption of ML technologies across Web3 platforms requires the achievement of standardization and interoperability. Top-down adoption facilitates the development of common frameworks, protocols, and standards, streamlining the integration of ML solutions into the Web3 ecosystem. This promotes a unified approach, reducing fragmentation and fostering collaboration among stakeholders.

- Scalability and Performance: Implementation of ML technologies within Web3 necessitates addressing challenges related to scalability and performance, crucial aspects of decentralized systems. Top-down adoption ensures that ML solutions are designed and optimized to meet these challenges, resulting in more efficient and scalable implementations that better serve the Web3 community.

- Ecosystem Growth and Maturity: The Web3 ecosystem is still evolving, with technologies, platforms, and applications continuously developing. A top-down approach allows for the gradual adoption of ML technologies as the ecosystem matures. This ensures their introduction in a manner that aligns with the growth and evolving needs of the Web3 community.

Future Trends of AI in Web3

As we step into the era of Web3, characterized by decentralized technologies, blockchain, and user-centric applications, the integration of artificial intelligence (AI) is poised to play a pivotal role in shaping the landscape. The convergence of AI and Web3 not only promises enhanced user experiences but also addresses the challenges associated with decentralization. Several emerging trends indicate the trajectory of AI in Web3, hinting at a future where intelligent algorithms seamlessly interact with decentralized systems.

1. Decentralized AI Networks (DAINs)

The rise of decentralized AI networks (DAINs) marks a paradigm shift in the way AI algorithms are developed and deployed. These networks leverage blockchain technology to facilitate decentralized training and execution of AI models. By distributing computation across a network of nodes, DAINs offer increased security, transparency, and resilience. This trend aligns with the core principles of Web3, empowering users to participate in AI model training and contribute to the network’s overall intelligence.

2. AI-powered Smart Contracts

Smart contracts, a cornerstone of blockchain technology, are evolving with the integration of AI capabilities. In Web3, smart contracts are expected to become more intelligent and dynamic, adapting to changing conditions and learning from user interactions. AI-powered smart contracts can optimize decision-making processes, automate complex tasks, and dynamically adjust contract terms based on real-world events, creating a more flexible and responsive decentralized ecosystem.

3. AI-driven Personalization

Web3 applications are expected to leverage AI for hyper-personalization, tailoring user experiences based on individual preferences and behaviors. As users engage with decentralized platforms, AI algorithms will analyze data patterns, providing personalized recommendations, content, and services. This personalized approach not only enhances user satisfaction but also fosters a more user-centric and engaging Web3 environment.

4. Trustworthy Oracles with AI Integration

Oracles act as bridges between blockchain networks and real-world data. Integrating AI into these oracles enhances their reliability by enabling them to verify and validate information autonomously. AI-powered oracles can dynamically adapt to changing data sources, improving the accuracy and trustworthiness of the information fed into smart contracts. This trend is crucial for ensuring the integrity of decentralized applications that rely on external data.
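To give a sense of what validating information could look like in practice, here is a minimal Python sketch of an oracle that cross-checks several data sources before reporting a value. It uses a simple median-based filter as a stand-in for a learned model, and the source names, the 5% tolerance, and the function name `aggregate_reports` are all hypothetical.

```python
import statistics
from typing import Dict, Optional


def aggregate_reports(reports: Dict[str, float], max_deviation: float = 0.05) -> Optional[float]:
    """Drop sources far from the median, then return the median of the rest.

    Returns None when fewer than half of the sources agree, so the caller
    can refuse to update the on-chain value.
    """
    if not reports:
        return None
    median = statistics.median(reports.values())
    if median == 0:
        return None
    trusted = [
        v for v in reports.values()
        if abs(v - median) / abs(median) <= max_deviation
    ]
    if len(trusted) * 2 < len(reports):
        return None
    return statistics.median(trusted)


# Hypothetical price reports; one feed is clearly off.
reports = {"feed_a": 100.0, "feed_b": 101.0, "feed_c": 99.5, "feed_d": 180.0}
print(aggregate_reports(reports))
```

The outlying feed is ignored and the remaining sources agree on a value. An AI-powered oracle could replace the fixed tolerance with a model that learns how reliable each source has been over time.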

5. AI for Decentralized Identity and Security

Decentralized identity solutions are gaining prominence in Web3, and AI plays a crucial role in enhancing the security and usability of these systems. AI algorithms can analyze user behavior patterns, biometric data, and contextual information to strengthen identity verification processes. This not only mitigates security risks but also ensures a seamless and user-friendly decentralized identity experience.

6. Community-driven AI Governance

AI models in Web3 are increasingly being governed by decentralized autonomous organizations (DAOs). Community-driven governance models empower users to participate in decision-making processes related to AI development, deployment, and upgrades. This trend promotes inclusivity and transparency, and ensures that AI systems align with the values and preferences of the Web3 community.

Final Words

With the potential to exert profound influence on various facets of the digital landscape, the ramifications of AI in Web3 are momentous. As we delve deeper into comprehending the applications and implications of AI within the Web3 ecosystem, we anticipate witnessing noteworthy progress and pioneering innovations in the foreseeable future. In an era where businesses and individuals increasingly depend on AI-generated content to amplify productivity and efficiency, a nuanced understanding of the challenges inherent in this technology becomes paramount. In the preceding sections, we have probed into the potential risks associated with AI-generated content and proposed strategies to mitigate these concerns.

While some of the suggested remedies may appear visionary, it is essential to clarify that our objective is not to prescribe a definitive roadmap but rather to illuminate potential issues that may emerge as the synergy between AI and Web3 technologies intensifies. The proposed solutions presented herein are neither exhaustive nor fully matured; instead, they serve as a springboard for ideation and further exploration. By fostering discussions around these concepts, our aim is to stimulate critical thinking and instigate conversations concerning the challenges linked to AI-generated content within the realm of Web3.

Embarking on this collective journey, it is crucial to acknowledge that the potency of AI extends beyond merely propelling business success; it encompasses the capacity to permeate all facets of our lives. Nurturing a culture of open dialogue, mutual understanding, and collaborative problem-solving is essential to navigating the challenges and opportunities entwined with AI-generated content in the context of Web3. In this rapidly evolving technological frontier, the need of the hour is to embrace the excitement and work collaboratively to ensure the responsible and effective harnessing of AI benefits in the Web3 ecosystem.

SoluLab emerges as a trailblazer in advancing the integration of AI within the Web3 landscape, offering specialized expertise in blockchain and artificial intelligence. With a commitment to driving innovation, SoluLab empowers businesses to navigate the intricacies of decentralized technologies, providing tailored Web3 development solutions that seamlessly integrate AI capabilities into Web3 applications. From enhancing user experiences to optimizing smart contracts, SoluLab’s comprehensive approach ensures the secure and efficient operation of decentralized networks. For enterprises seeking to harness the transformative potential of AI in Web3, partnering with SoluLab signifies a strategic leap into the future. Ready to revolutionize your digital presence? Connect with SoluLab today and embark on a journey towards a more intelligent, decentralized future.

FAQs

1. What is Web3, and how does AI fit into this paradigm?

Web3 represents the next evolution of the internet, characterized by decentralized technologies like blockchain. Artificial Intelligence (AI) plays a crucial role in Web3 by enhancing user experiences, optimizing smart contracts, and contributing to the overall intelligence of decentralized networks.

2. How does SoluLab integrate AI into Web3 applications?

SoluLab, as a technology consulting firm, specializes in seamlessly integrating AI capabilities into Web3 applications. Leveraging expertise in both blockchain and artificial intelligence, SoluLab tailors solutions to enhance user experiences, optimize smart contracts, and ensure the secure and efficient operation of decentralized networks.

3. What challenges are associated with AI-generated content in Web3?

Challenges with AI-generated content in Web3 include security and transparency concerns. SoluLab addresses these by offering innovative solutions that prioritize security, privacy, and responsible AI practices, fostering a more secure and privacy-preserving digital environment.

4. How can businesses benefit from the integration of AI and Web3?

Businesses can benefit from enhanced user experiences, optimized processes, and increased efficiency through the integration of AI and Web3. SoluLab stands as a strategic partner with its deep understanding of both technologies, offering tailored solutions to help businesses harness the full transformative potential of AI within the Web3 ecosystem.

5. Are solutions for AI-generated content in Web3 fully developed, and how does SoluLab address the challenges?

The proposed solutions presented are not fully matured but serve as a starting point for further exploration and ideation. SoluLab takes an adaptive and collaborative approach, fostering open dialogue and critical thinking to collectively address the evolving challenges associated with AI-generated content in the dynamic context of Web3.